Claude Opus 4.7 on Amazon Bedrock Is Not a Drop-In Upgrade

TL;DR:

- Treat Opus 4.7 in Bedrock as a migration, not a routine version bump. AWS changed the model and inference layer together, while Anthropic changed tokenization and runtime behavior.

- Before rollout, re-test tokenization, max_tokens headroom, effort settings, tool use, cost per completed task, quotas, Region coverage, and any differences between Bedrock and Anthropic's direct API.

AWS added Claude Opus 4.7 to Amazon Bedrock on April 16, 2026. If you already run Bedrock, the important change is not just the new model name. AWS changed the model and the inference layer together, and Anthropic changed tokenization and runtime behavior. Treat this as a migration, not a routine swap.

What changed

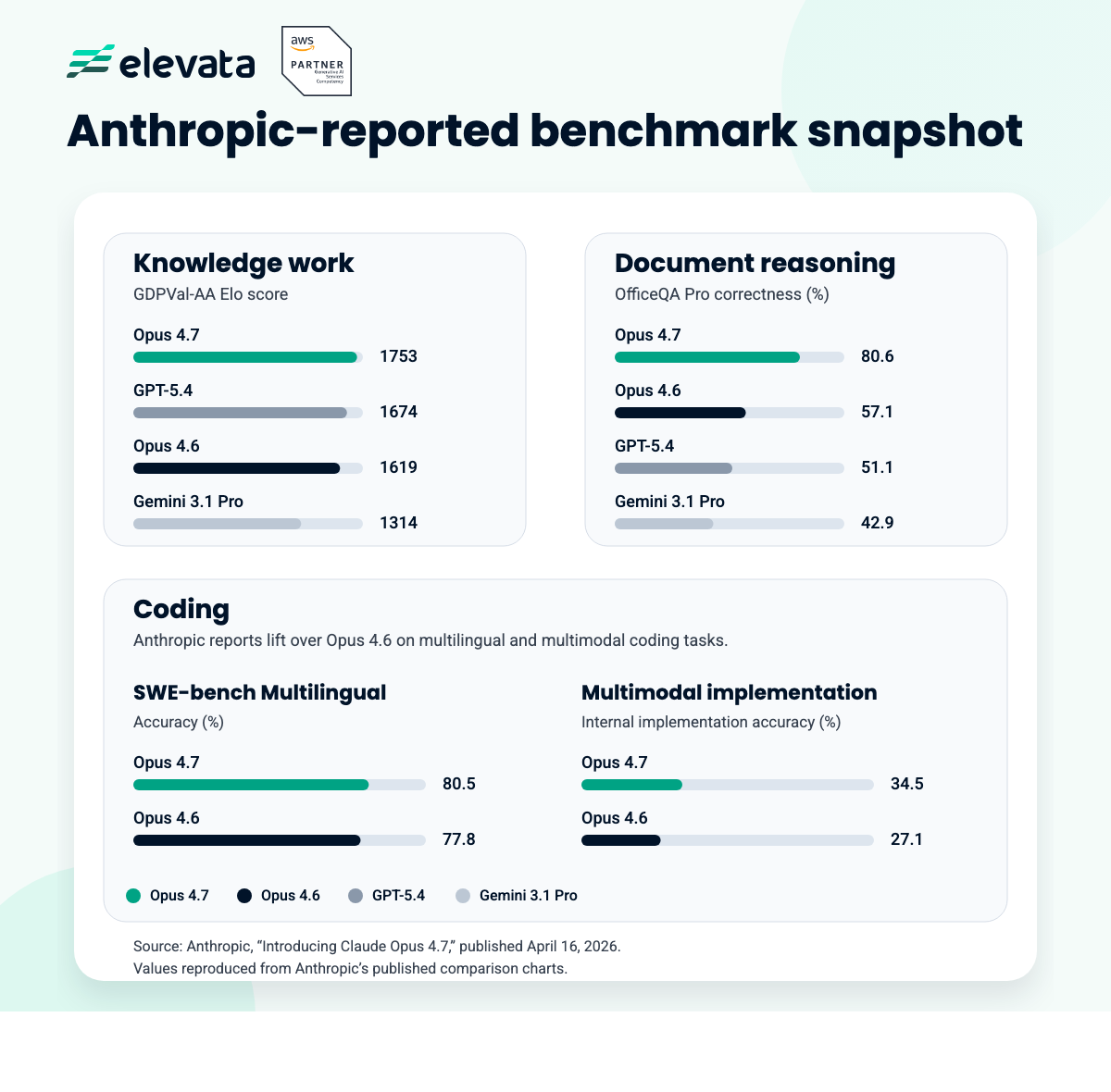

The headline features are easy enough to summarize: stronger coding, better document and research work, more stable long-context runs, and higher-resolution vision. The more important detail is AWS's own warning that teams may need prompt changes and eval-harness tweaks to get the best results. For production teams, that should be the headline.

The first thing to re-test is tokenization. Anthropic says Opus 4.7 can use roughly 1.0x to 1.35x as many text tokens as Opus 4.6, depending on the content. Same prompt, same max_tokens, different headroom. If your system compacts context, estimates tokens client-side, or runs close to limits, re-test it.
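The headroom arithmetic can be sketched in a few lines. This is a planning aid only: the 1.35x upper bound is the figure quoted above, while the helper names, the context limit, and the example numbers are hypothetical and should be replaced with values measured on your own prompts.

```python
# Upper end of the quoted 1.0x-1.35x range, in percent so the math stays in integers.
WORST_CASE_PCT = 135


def projected_input_tokens(measured_46_tokens: int, factor_pct: int = WORST_CASE_PCT) -> int:
    """Scale a token count measured on Opus 4.6 to a planning figure for Opus 4.7.

    Rounds up, so the estimate never understates the projected size.
    """
    return (measured_46_tokens * factor_pct + 99) // 100


def remaining_headroom(context_limit: int, measured_46_tokens: int, max_tokens: int,
                       factor_pct: int = WORST_CASE_PCT) -> int:
    """Tokens left after the projected input plus the reserved output budget.

    A negative result means the request may no longer fit in the window.
    context_limit is whatever limit your deployment actually enforces
    (hypothetical here).
    """
    return context_limit - projected_input_tokens(measured_46_tokens, factor_pct) - max_tokens


if __name__ == "__main__":
    # Hypothetical request: 140,000 input tokens on 4.6, 8,000 reserved for output.
    print(remaining_headroom(200_000, 140_000, 8_000, factor_pct=100))  # 4.6 baseline
    print(remaining_headroom(200_000, 140_000, 8_000))                  # 4.7 worst case
```

A prompt that left comfortable room on 4.6 can end up close to the limit under the worst-case factor, which is the case compaction triggers and client-side estimators need to handle.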

Behavior changed too. Opus 4.7 tends to use fewer tools and fewer subagents by default, and effort settings matter more than they did before. Lower effort can under-think. Higher effort can recover deeper reasoning and more tool use, but it also changes cost and latency. If your agents rely on implicit fan-out, make that behavior explicit.

If your stack spans Bedrock and Anthropic's direct API, re-test API behavior too. Anthropic removed several older controls, changed thinking defaults, and made effort settings more central to behavior. Prompt parity is not enough if runtime behavior changed underneath it.

Cost also needs a closer read than the price card suggests. Anthropic kept Opus 4.7 at the same published rates as Opus 4.6, but larger token counts, heavier reasoning, and high-resolution vision can still push cost per completed task up. List price stayed flat. Production economics may not.
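To see how flat list prices can still produce a higher bill, here is a minimal sketch of cost per completed task. The per-million-token prices and the workload numbers are placeholders, not published rates; the only figure taken from the text above is the 1.35x worst-case token factor.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    input_tokens: int            # input tokens per attempt
    output_tokens: int           # output tokens per attempt
    attempts_per_success: float  # average attempts (including retries) per completed task


def cost_per_completed_task(w: Workload,
                            input_price_per_mtok: float,
                            output_price_per_mtok: float) -> float:
    """Expected spend to get one task completed, at the given per-million-token prices."""
    per_attempt = (w.input_tokens * input_price_per_mtok
                   + w.output_tokens * output_price_per_mtok) / 1_000_000
    return per_attempt * w.attempts_per_success


if __name__ == "__main__":
    # Placeholder prices: substitute the rates from your own price card.
    IN_PRICE, OUT_PRICE = 10.0, 50.0

    baseline = Workload(input_tokens=100_000, output_tokens=10_000, attempts_per_success=1.0)
    # Same task, same prices, input token count scaled by the 1.35x worst case.
    inflated = Workload(input_tokens=135_000, output_tokens=10_000, attempts_per_success=1.0)

    print(cost_per_completed_task(baseline, IN_PRICE, OUT_PRICE))
    print(cost_per_completed_task(inflated, IN_PRICE, OUT_PRICE))
```

The same structure makes it easy to plug in a measured retry rate or a larger output budget from higher effort settings, both of which move the result without any change to the price card.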

Bedrock also exposes Adaptive thinking, which lets the model vary its reasoning budget with request complexity. That makes effort settings part of the evaluation, not a minor tuning step at the end.

Why the Bedrock layer matters

Opus 4.7 runs on Bedrock's next-generation inference engine, with updated scheduling and scaling logic. AWS says that should improve availability for steady workloads, provide more headroom for fast-rising demand, and queue requests under load instead of rejecting them. AWS also says prompts and responses are not visible to Anthropic or AWS operators. If privacy, compliance, or burst traffic matter in your environment, those details belong in the evaluation.

If you want to test it, Bedrock exposes Opus 4.7 in the console and Playground, through the Anthropic Messages API, the Converse API, the Invoke API, the AWS SDK, and the AWS CLI. The model ID is anthropic.claude-opus-4-7. At launch, AWS made it available in N. Virginia, Tokyo, Ireland, and Stockholm. Teams running elsewhere should check latency, data residency, and failover before assuming a clean rollout.
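A first smoke test through the Converse API might look like the sketch below, using boto3's `bedrock-runtime` client. The model ID is the one given above; the Region codes are the standard AWS codes for the four launch Regions, and the function names are hypothetical. Your account may need model access enabled or a different identifier, so treat this as a starting point rather than a verified recipe.

```python
# Standard AWS Region codes for the launch Regions named above.
LAUNCH_REGIONS = {
    "us-east-1": "N. Virginia",
    "ap-northeast-1": "Tokyo",
    "eu-west-1": "Ireland",
    "eu-north-1": "Stockholm",
}

MODEL_ID = "anthropic.claude-opus-4-7"


def build_converse_request(prompt: str, max_tokens: int = 1024) -> dict:
    """Build keyword arguments for a single-turn Converse call."""
    return {
        "modelId": MODEL_ID,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def smoke_test(region: str, prompt: str, max_tokens: int = 1024):
    """Send one prompt and return (text, usage). Requires AWS credentials."""
    if region not in LAUNCH_REGIONS:
        raise ValueError(f"{region} is not in the launch Region list; check current coverage")

    import boto3  # imported here so the request builder works without boto3 installed

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(prompt, max_tokens))

    # Join only the text blocks; other block types may also be present.
    blocks = response["output"]["message"]["content"]
    text = "".join(block["text"] for block in blocks if "text" in block)
    return text, response["usage"]
```

The returned `usage` dictionary reports input and output token counts for the call, which gives a direct way to compare measured counts against whatever your client-side estimator predicted.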

Where it belongs in a stack

Opus 4.7 makes the most sense where better judgment pays for itself: planning, review, multimodal verification, dense document analysis, and long-running agent loops. That does not make it the right default for every request.

In many stacks, the better pattern is to use Opus 4.7 as the planner or final reviewer and let cheaper models handle routine extraction, transformation, and control flow. Use Opus where it earns its cost.
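One way to make that split explicit is a small routing table keyed by task type. This is a sketch under assumptions: the task categories mirror the ones named above, `CHEAPER_MODEL_ID` is a placeholder for whichever lower-cost model your stack uses, and real routers usually also consider input size and past failure rates.

```python
from enum import Enum


class TaskKind(Enum):
    PLAN = "plan"
    REVIEW = "review"
    EXTRACT = "extract"
    TRANSFORM = "transform"
    CONTROL_FLOW = "control_flow"


OPUS_MODEL_ID = "anthropic.claude-opus-4-7"
CHEAPER_MODEL_ID = "your-cheaper-model-id"  # placeholder, not a real model ID

# Judgment-heavy steps go to Opus; routine steps go to the cheaper model.
OPUS_TASKS = {TaskKind.PLAN, TaskKind.REVIEW}


def pick_model(kind: TaskKind) -> str:
    """Return the model ID to use for a given step of the pipeline."""
    return OPUS_MODEL_ID if kind in OPUS_TASKS else CHEAPER_MODEL_ID
```

Keeping the decision in one function also gives the evaluation a single place to flip when comparing an all-Opus run against a mixed run.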

What to re-test before rollout

- Prompts and scoring rubrics. Better reasoning usually changes response shape, assumptions, and failure modes.

- End-to-end agent runs. The gains may show up over long traces, not in isolated prompts.

- Token budgets. Recheck max_tokens, compaction triggers, and any client-side token estimation.

- Tool use and branching. If the model now calls fewer tools by default, make tool behavior explicit.

- Cost on real workloads. Re-price image-heavy, document-heavy, and long-running flows.

- Platform assumptions. Verify Region coverage, quotas, and any runtime differences between Bedrock and Anthropic's direct API.

Benchmark it on your own workload, in the Region you actually use, through the API path you intend to keep in production. Compare cost per completed task, retry rate, latency, and output quality. That will tell you more than any launch chart will.
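The comparison can be as simple as summarizing logged runs per model. The record fields and function name below are hypothetical; the sketch assumes you already log, for each task, whether it completed, how many attempts it took, its wall-clock latency, and its total cost. Output quality still needs your own scoring rubric and is not covered here.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class TaskRun:
    completed: bool   # did the task finish successfully?
    attempts: int     # total attempts, including the first
    latency_s: float  # end-to-end wall-clock seconds
    cost_usd: float   # total spend across all attempts


def summarize(runs: list[TaskRun]) -> dict[str, Optional[float]]:
    """Aggregate the rollout metrics for one model over a set of task runs."""
    if not runs:
        raise ValueError("no runs to summarize")

    completed = sum(1 for r in runs if r.completed)
    total_attempts = sum(r.attempts for r in runs)
    total_cost = sum(r.cost_usd for r in runs)

    return {
        "completion_rate": completed / len(runs),
        # Share of attempts that were retries rather than first tries.
        "retry_rate": (total_attempts - len(runs)) / total_attempts,
        "median_latency_s": median(r.latency_s for r in runs),
        # Failed runs still cost money, so total spend is divided by successes only.
        "cost_per_completed_task": total_cost / completed if completed else None,
    }
```

Running `summarize` once per model over the same task set, in the same Region and API path, gives a side-by-side view that a launch chart cannot.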
